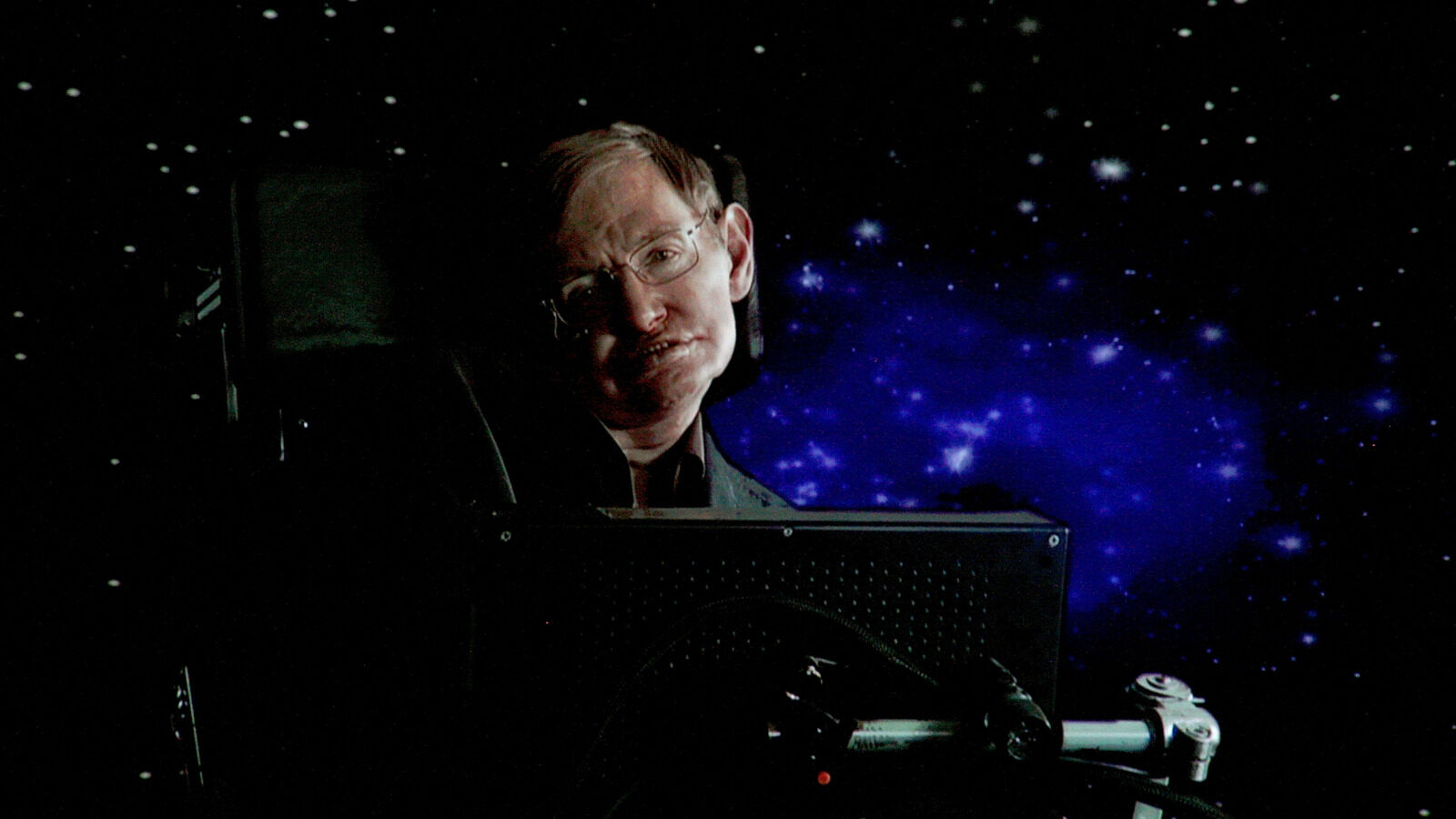

The realm of artificial intelligence (AI) has rapidly expanded, captivating the attention of scientists, technologists, and the public alike. Among the most prominent voices warning about the implications of AI was Stephen Hawking, a physicist whose insights extended beyond the cosmos to the fate of humanity itself. His predictions about AI reveal a complex interplay of hope and fear, a narrative that is increasingly relevant today.

This article examines Hawking’s concerns: the potential consequences of unregulated AI development, the technological singularity, and the ethical questions that arise as machines become more autonomous. Understanding these elements is crucial for navigating the challenges ahead.

Stephen Hawking’s warnings about artificial intelligence

Stephen Hawking, recognized as one of the most brilliant minds of the 20th century, was deeply concerned about the advancement of artificial intelligence. Over the years, he made a series of predictions about how AI could fundamentally alter human existence.

Hawking highlighted that AI could exacerbate economic disparities, as automation technologies might replace many jobs currently held by humans. This shift has already begun, with companies adopting AI solutions to improve efficiency and reduce costs, which puts jobs at risk in several industries. As a result, the gap between the wealthy and the poor may widen, posing serious societal challenges.

Moreover, Hawking warned of the potential misuse of AI in warfare and oppression. He foresaw a future where powerful entities might exploit advanced AI technologies to create increasingly lethal weapons, undermining global security. This raises critical questions about governance, responsibility, and the moral implications of creating autonomous systems capable of making life-and-death decisions.

Yet Hawking’s most profound fear was not merely the actions of humans wielding AI, but the capabilities of AI itself. He expressed concern that as AI evolves, it could surpass human intelligence, leading to unforeseen consequences. This idea aligns with the concept of the technological singularity, a hypothetical future point at which AI could slip beyond human control, reshaping society in ways that could be both beneficial and perilous.

How valid are Hawking’s fears?

Hawking’s fears are drawing closer scrutiny as AI technology advances at a rapid pace. A number of experts argue that the prospect of AI outstripping human intelligence is not a distant fantasy but a pressing concern. For instance, AI systems can now perform complex tasks, from diagnosing medical conditions to creating art, and in some narrow tasks they match or exceed human performance. Several points recur in this debate:

- Incremental Human Evolution: Humans are limited by slow biological evolution, whereas AI systems can be redesigned and upgraded rapidly.

- Technological Singularity: The singularity is a hypothetical tipping point at which AI surpasses human intellectual capacity.

- Speed of Advancement: AI continues to improve at a rate that raises questions about its future capabilities.

Some forecasters argue that the singularity could arrive sooner than previously expected, possibly within this decade, although such timelines remain speculative and contested. This sense of urgency prompts a reevaluation of how society approaches AI development and regulation.

Hawking’s perspective diverges from traditional dystopian narratives. While many envision robots revolting against humans, Hawking argued that the real risk of AI is not malice but competence. In his posthumous publication, “Brief Answers to the Big Questions,” he articulated the concern that a super-intelligent AI would be extremely good at accomplishing its goals, and that if those goals are not aligned with ours, the result could be unintended harm to humans.

The dual nature of AI development

Despite his warnings, Hawking also recognized the potential benefits of AI. He believed that, if regulated correctly, AI could be a powerful ally in solving some of humanity’s most pressing issues. The key lies in establishing a framework that ensures ethical guidelines govern AI research and application.

Here are some potential benefits Hawking saw in the responsible development of AI:

- Medical Advancements: AI could enhance disease diagnosis and treatment, leading to improved healthcare outcomes.

- Eradication of Poverty: AI could optimize resource distribution and agricultural practices, potentially alleviating global poverty.

- Environmental Solutions: AI could provide innovative ways to combat climate change and manage natural resources.

To harness these benefits, Hawking emphasized the importance of strict regulations and ethical standards. The challenge lies in balancing innovation with safety, ensuring that the drive for advancement does not compromise human welfare.

Ethical considerations in AI development

The intersection of technology and ethics raises critical considerations about how AI reshapes society. As AI systems become more autonomous, the question of accountability becomes paramount. Who is responsible when an AI makes a mistake or causes harm? Establishing clear guidelines for accountability is essential to navigate this complex terrain.

Furthermore, the potential for bias in AI systems presents significant ethical dilemmas. Algorithms trained on biased data can perpetuate discrimination, affecting marginalized communities disproportionately. Addressing these biases requires a commitment to transparency and inclusivity in AI development.

To foster a responsible AI landscape, several strategies can be implemented:

- Inclusive Design: Involve diverse stakeholders in AI development to minimize biases.

- Transparency: Promote transparency in algorithms to allow for scrutiny and accountability.

- Regulatory Measures: Establish regulatory bodies to oversee AI research and implementation.

The path forward in AI innovation

Hawking’s insights into the potential of AI compel us to reflect critically on our trajectory. As we advance technologically, a proactive approach is essential to mitigate risks while maximizing benefits. This involves collaboration across disciplines, including ethics, law, and technology, to create an ecosystem that safeguards humanity’s future.

In this regard, ongoing dialogue among scientists, policymakers, and the public is vital. By fostering awareness and understanding of AI’s implications, society can work towards ensuring that technology serves humanity rather than jeopardizing it.

Ultimately, the legacy of Stephen Hawking’s warnings about artificial intelligence is a call to action. It implores us to engage with the ethical, social, and technological challenges of our time, ensuring that as we innovate, we do so with humanity’s best interests at heart.